DeepSeek-V4 & GPT-5.5: AI Will Never Forget You Again

Article Summary

This article explores the paradigm shift brought by DeepSeek-V4's one-million-token context window for autonomous agents, contrasting with GPT-5.5's consolidation of coding capabilities. It highlights that the real gain lies in eliminating the re-contextualization cost, radically transforming AI-based workflows.

Key Points:

- DeepSeek-V4 offers a one-million-token context window, allowing AI agents to process the equivalent of seven novels in a single session.

- This massive contextual capacity eliminates the need for constant re-contextualization, reducing cognitive load for AI users.

- Autonomous agents can now ingest entire codebases, long project histories, or complete documentation without losing the thread.

- While DeepSeek-V4 innovates on context, GPT-5.5 focuses on improving and consolidating its coding capabilities.

- The real impact of these advances lies in transforming concrete workflows for developers, freelancers, and teams that rely on AI.

- Eliminating the re-contextualization cost is the fundamental gain, enabling smoother and more efficient interaction with artificial intelligences.

One million tokens. Let that number sink in.

That’s the context window DeepSeek-V4 packs for autonomous agents. One million tokens is approximately 750,000 words — the equivalent of seven average-sized novels that the model can read, analyze, and reason over in a single session. Compare that to the 8,000 tokens of early GPT-4, and you understand why we’re talking about a paradigm shift, not a routine update.
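If you want to sanity-check those numbers, the arithmetic fits in a few lines. This is only a sketch: the ratio of 0.75 words per token is the rule of thumb behind the figure above, and the 107,000-word novel length is an assumption chosen to match "seven novels". Real ratios vary with the tokenizer and the language.

```python
# Rule-of-thumb conversion; real ratios depend on the tokenizer and the language.
WORDS_PER_TOKEN = 0.75      # assumption: about 0.75 English words per token
WORDS_PER_NOVEL = 107_000   # assumption: length of an "average-sized" novel


def tokens_to_words(tokens: int) -> int:
    """Approximate number of English words that fit in `tokens` tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def tokens_to_novels(tokens: int) -> float:
    """Approximate number of average-sized novels that fit in `tokens` tokens."""
    return tokens_to_words(tokens) / WORDS_PER_NOVEL


if __name__ == "__main__":
    # Compare an early 8,000-token window with a one-million-token window.
    for window in (8_000, 1_000_000):
        print(f"{window:,} tokens ~ {tokens_to_words(window):,} words "
              f"~ {tokens_to_novels(window):.2f} novels")
```

Under these assumptions, the 8,000-token window holds about 6,000 words, a small fraction of a single novel.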

Meanwhile, OpenAI is consolidating its coding capabilities in GPT-5.5. Not a disruptive new model — a consolidation. And that’s precisely where the analysis gets interesting. Because while everyone is watching the benchmarks, the real stakes are playing out elsewhere: in how these models will transform the concrete workflows of developers, freelancers, and teams that rely on AI every day.

What “massive context” really means in the field

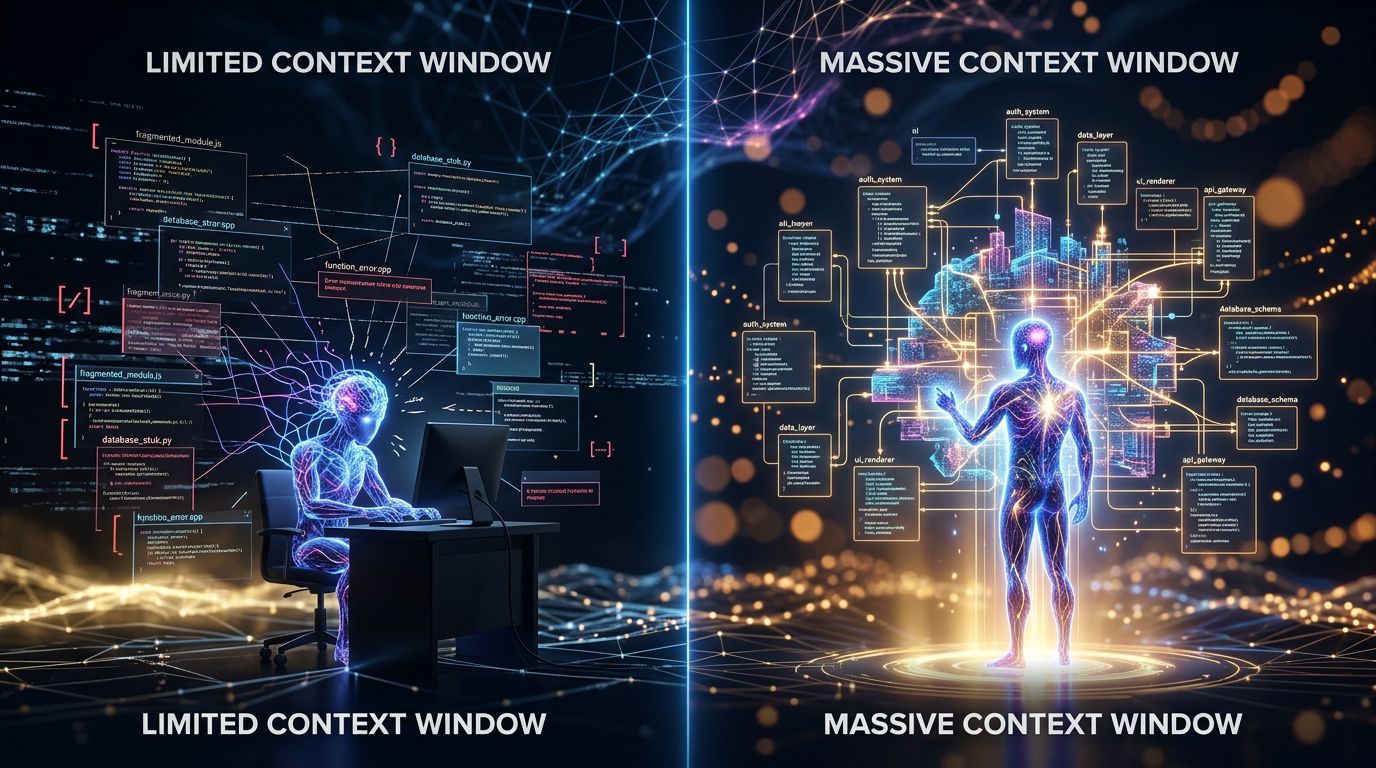

Here’s where it gets juicy. Most articles about DeepSeek-V4 stop at the number. One million tokens, wow, impressive, moving on. What they never tell you is what it concretely changes in an autonomous agent workflow.

An AI agent with 8,000 tokens of context is like a consultant who reads the first page of every document before your meeting. He can pretend to follow along, but the moment you dig deeper, he loses the thread. You have to re-explain. Re-contextualize. Compensate for his amnesia with your own cognitive load.

An agent with one million tokens of context is different. It can ingest an entire medium-sized codebase, all exchanges from a six-month project, the complete documentation of an API, and still have room to spare. It doesn’t lose the thread — because there’s no thread to lose. The context is there, complete, available.
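Whether your own codebase really fits is easy to estimate before you try. The sketch below assumes roughly four characters per token, which is a common approximation and not a property of any specific model. The function names, the file suffixes, and the 50,000-token reserve for the model's reply are all hypothetical choices for illustration.

```python
from pathlib import Path

CHARS_PER_TOKEN = 4         # assumption: rough average for English text and code
CONTEXT_WINDOW = 1_000_000  # the window size discussed in this article


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN


def codebase_tokens(root: str, suffixes=(".py", ".md")) -> int:
    """Sum the estimated tokens of every matching file under `root`."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="ignore")
            total += estimate_tokens(text)
    return total


def fits(tokens: int, window: int = CONTEXT_WINDOW, reserve: int = 50_000) -> bool:
    """True if the material fits while leaving `reserve` tokens for the reply."""
    return tokens + reserve <= window


if __name__ == "__main__":
    tokens = codebase_tokens(".")
    print(f"about {tokens:,} tokens; fits in one window: {fits(tokens)}")
```

For a real decision, swap the character heuristic for the tokenizer of the model you actually use.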

The real gain isn’t memory. It’s the elimination of the re-contextualization cost.

GPT-5.5 and coding: consolidation as strategy

Let’s flip the perspective to OpenAI. Everyone expected a revolution; they delivered a consolidation. First reaction: disappointment. Second reaction, after reflection: it might actually be smarter.

GPT-5.5 isn’t trying to reinvent AI coding. It’s trying to make it reliable. The difference is fundamental. Previous coding models could produce brilliant code in 80% of cases and catastrophically wrong code in the remaining 20% — without you being able to tell the difference before testing. This variance is the real problem in production.

What we observe with GPT-5.5 is a reduction in this variance. Fewer hallucinations on APIs. Better consistency on long refactoring tasks. More reliable completion on complex patterns. It’s not spectacular to demonstrate in a YouTube demo. It’s critical for a developer shipping to production.
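To see why variance matters more than the average, compare two hypothetical models on made-up per-task scores. The numbers below are illustrative only, not measurements of GPT-5.5 or of any real model, and the `risk_adjusted` score (mean minus standard deviation) is just one simple way to penalize inconsistency.

```python
from statistics import mean, pstdev


def summarize(scores: list[float]) -> dict[str, float]:
    """Average quality, spread, and worst case for a list of per-task scores."""
    return {"mean": mean(scores), "stdev": pstdev(scores), "worst": min(scores)}


def risk_adjusted(scores: list[float]) -> float:
    """Mean penalized by spread: a simple, hypothetical reliability score."""
    return mean(scores) - pstdev(scores)


# Made-up scores between 0 and 1 for two hypothetical models.
flashy = [1.0, 1.0, 1.0, 1.0, 0.0]  # brilliant 80% of the time, broken 20%
steady = [0.8, 0.8, 0.8, 0.8, 0.8]  # never brilliant, never broken

if __name__ == "__main__":
    for name, scores in (("flashy", flashy), ("steady", steady)):
        print(name, summarize(scores), round(risk_adjusted(scores), 2))
```

Both models average the same score, but only one of them ever hands you code that fails outright.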

My analysis reveals an underlying trend: AI labs have understood that their professional users are no longer looking for models that impress — they’re looking for models they can rely on. Reliability is the new benchmark.

“The best tool is not the most powerful one. It’s the one you can trust.” — a foundational principle in software engineering, truer than ever applied to AI.

Autonomous agents: what massive contexts really unlock

Experience has taught me that AI agents rarely fail on isolated tasks. They fail on coordination. On maintaining consistency between decisions made at different moments in a long workflow. On the ability to “remember” that the decision made at step 3 has implications for what we do at step 47.

DeepSeek-V4 attacks this problem directly. With one million tokens available, an agent can maintain a coherent representation of a complex project from start to finish — without having to compress, summarize, or truncate the decision history. This is not a technical detail. It’s the difference between an agent that executes tasks and an agent that manages a project.

Three use cases that become realistic with this level of context:

- Full codebase refactoring: the agent sees all dependencies, all patterns, all architectural decisions simultaneously. No more partial refactoring that breaks what it couldn’t see.

- Contract analysis and due diligence: ingesting 500 pages of legal documents, identifying contradictions, risky clauses, inconsistencies — in a single pass.

- Long-term project management: an agent that follows a project over several weeks without losing the context of initial decisions.

What this changes for freelancers and teams

Let’s look at this from another angle. These advances aren’t just news for ML researchers or Google engineers. They have direct implications for anyone using AI as a daily work tool.

For the freelancer managing ten clients in parallel, the challenge isn’t the raw power of the model — it’s the AI’s ability to maintain a separate and coherent context for each client, without confusion, without resetting. Massive contexts enable more sophisticated memory architectures. Systems like pgvector paired with large-context models can maintain a rich representation of each client relationship — full history, preferences, ongoing projects, past decisions.
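Here is a minimal sketch of the per-client separation idea. It is not pgvector: a Python dictionary stands in for the database, and a bag-of-words counter stands in for a real embedding model. In production, the notes would more plausibly live in a Postgres table with a vector column, filtered by client. The `ClientMemory` class and its methods are hypothetical names.

```python
import math
from collections import Counter, defaultdict


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class ClientMemory:
    """Keeps each client's notes in a separate bucket so contexts never mix."""

    def __init__(self) -> None:
        self._notes = defaultdict(list)  # client_id -> [(text, vector)]

    def remember(self, client_id: str, text: str) -> None:
        self._notes[client_id].append((text, embed(text)))

    def recall(self, client_id: str, query: str, k: int = 3) -> list[str]:
        """Return the k notes of this client that are most similar to the query."""
        q = embed(query)
        ranked = sorted(self._notes.get(client_id, []),
                        key=lambda note: cosine(q, note[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


if __name__ == "__main__":
    memory = ClientMemory()
    memory.remember("acme", "Acme prefers invoices in euros")
    memory.remember("globex", "Globex prefers invoices in dollars")
    print(memory.recall("acme", "invoices currency", k=1))
```

The point of the design is the lookup key: every recall is scoped to one client, so a note about one account can never leak into the context built for another.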

For an agency managing complex campaigns, the ability to have a single coherent agent analyze an entire brief, all existing assets, and performance history — without artificial splitting — changes the quality of outputs.

For solo developers or small teams, a consolidated GPT-5.5 means less time spent verifying and correcting generated code. If the model is 20% more reliable on complex patterns, and review time shrinks roughly in proportion, that’s about 20% of review time saved. Over a 40-hour week, that’s measurable.
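To put a number on "measurable", here is the back-of-the-envelope version. It assumes review time shrinks in direct proportion to the reliability gain, which is a simplification, and the ten hours of weekly review is a hypothetical figure you should replace with your own.

```python
def review_hours_saved(weekly_review_hours: float, reliability_gain: float) -> float:
    """Hours saved per week, assuming review time shrinks in proportion to the gain."""
    if not 0.0 <= reliability_gain <= 1.0:
        raise ValueError("reliability_gain must be between 0 and 1")
    return weekly_review_hours * reliability_gain


if __name__ == "__main__":
    # Hypothetical developer: 10 of their 40 weekly hours go to reviewing AI output.
    saved = review_hours_saved(weekly_review_hours=10, reliability_gain=0.20)
    print(f"about {saved:.1f} hours saved per week")
```

With those inputs the saving is about two hours a week, roughly one working day per month.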

What nobody tells you in new model announcements: the real ROI isn’t in peak performance. It’s in reducing daily friction.

The real challenge: integration, not adoption

But beware the trap. The availability of a one-million-token model doesn’t magically solve integration problems. A massive unstructured context is still a massive unstructured context. Giving an agent access to one million tokens of unorganized data is like giving a consultant access to an entire room of filing cabinets with no index.

The real skill that emerges here is context architecture. How do you structure information so the agent can navigate it efficiently? How do you prioritize what needs to be in active context versus what can be retrieved via semantic search? How do you avoid signal dilution in noise when the window is massively wide?

These are technical questions, but they have practical answers. Stacks that combine native large context (for reasoning coherence) with precise vector retrieval (for relevance) are starting to show measurable results. This is no longer research — it’s workflow engineering.
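One way to picture such a stack is a token budget that is filled in two passes: first the material that must always be in active context, then retrieved chunks in order of relevance. The sketch below is one possible approach under stated assumptions, not a reference design. The `Chunk` fields, the relevance scores, and the greedy packing rule are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    name: str
    tokens: int
    relevance: float = 0.0  # hypothetical score, e.g. from a vector search
    pinned: bool = False    # pinned chunks must always be in active context


def build_context(chunks: list[Chunk], budget: int) -> list[Chunk]:
    """Keep every pinned chunk, then add the most relevant others that still fit."""
    selected = [c for c in chunks if c.pinned]
    used = sum(c.tokens for c in selected)
    if used > budget:
        raise ValueError("pinned chunks alone exceed the budget")
    candidates = sorted((c for c in chunks if not c.pinned),
                        key=lambda c: c.relevance, reverse=True)
    for chunk in candidates:
        if used + chunk.tokens <= budget:  # skip anything that would overflow
            selected.append(chunk)
            used += chunk.tokens
    return selected


if __name__ == "__main__":
    chunks = [
        Chunk("brief", 2_000, pinned=True),
        Chunk("api_docs", 5_000, relevance=0.9),
        Chunk("old_thread", 6_000, relevance=0.4),
        Chunk("style_guide", 1_000, relevance=0.2),
    ]
    print([c.name for c in build_context(chunks, budget=8_500)])
```

Even with a one-million-token window the same logic applies. The budget is simply larger, and the ordering still decides what the model sees when everything does not fit.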

“A tool is only as powerful as you know how to integrate it.” — an obvious truth the AI industry rediscovers with every generation of models.

Three actionable insights to take away

1. Evaluate your workflows on the re-contextualization criterion. How much time do you spend each week re-explaining context to your AI? If the answer exceeds 30 minutes, you have an architecture problem, not a model problem.

2. Reliability before power. For production use cases — code, contracts, client communications — choose the most reliable model, not the most impressive on benchmarks. A consolidated GPT-5.5 can beat a more “powerful” model if its variance is lower.

3. Start thinking in terms of agents, not assistants. The difference: an assistant answers your questions. An agent takes initiative, maintains an objective over time, and manages complexity without constant intervention. Massive contexts make this distinction concrete for the first time.

Memory is no longer a luxury

Impact. That’s the right word to summarize what DeepSeek-V4 and GPT-5.5 signal together.

The era of amnesiac AI — where you re-explained your clients at every session, where you artificially split your projects to fit within the context window, where you compensated for the model’s amnesia with your own cognitive overload — that era is ending. Not in five years. Now.

What remains to be built is the infrastructure that truly exploits these capabilities. Systems with persistent memory, structured context, agents that work in the background and report when relevant. Tools that know your clients as well as you do — and don’t reset that knowledge at every conversation.

That’s exactly what we’re working on with Nova-Mind. Permanent memory via pgvector, proactive agents, client context that persists and grows richer over time. If you’re a freelancer, solopreneur, or small agency and you’re tired of managing the amnesia of your AI tools, now is the right time to try it. The models are finally up to the task. So is the infrastructure.

The question is no longer “can AI remember this?” The question is “do you have a system that allows it to?”